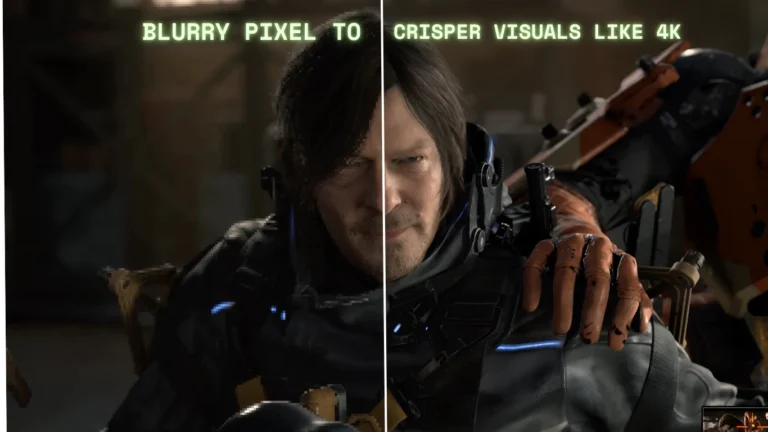

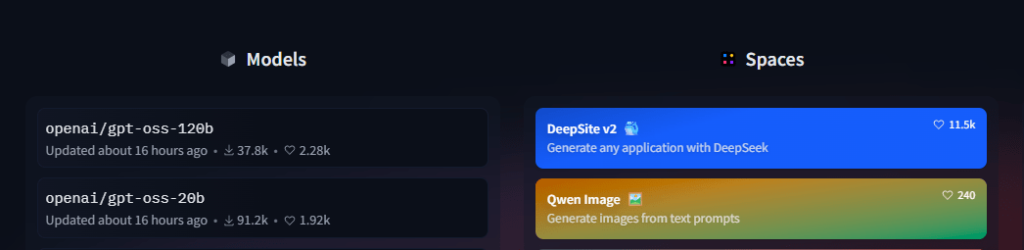

It finally happened. For the first time since GPT-2 in 2019, OpenAI has released open-weight language models: gpt-oss-120b and gpt-oss-20b. And yes, they’re free to download and run locally. For devs, tinkerers, and researchers, this is a massive deal. These models aren’t just experimental tools; they’re powerful LLMs licensed under Apache 2.0, with commercial use allowed.

Let’s break down what makes this such a big moment for OpenAI.

What are gpt-oss-120b and gpt-oss-20b?

These are text-only language models built using OpenAI’s latest training techniques. Both are trained to use chain-of-thought reasoning, a method where the model works through a complex problem in steps before answering. The models are:

- gpt-oss-120b: The larger model, positioned as a rival to proprietary models like o3 and o4-mini; OpenAI reports that it matches or beats them on some benchmarks.

- gpt-oss-20b: A smaller, 16GB VRAM-friendly model designed to run locally on consumer-grade PCs and laptops.
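To see why a "16GB-friendly" claim depends on how the weights are stored, a rough back-of-the-envelope estimate helps. The sketch below multiplies parameter count by bits per weight. The nominal parameter counts (read off the model names) and the 4-bit and 16-bit storage formats are assumptions for illustration, not figures from OpenAI, and the estimate ignores activations, KV cache, and runtime overhead.

```python
# Rough weight-memory estimate: parameters x bits per weight.
# Assumptions (not from the article): nominal parameter counts read off the
# model names, and 4-bit vs 16-bit storage chosen purely for illustration.
# Activations, KV cache, and runtime overhead are ignored.

NOMINAL_PARAMS = {
    "gpt-oss-20b": 20e9,
    "gpt-oss-120b": 120e9,
}


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Return the approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 1e9


for name, params in NOMINAL_PARAMS.items():
    for bits in (4, 16):
        print(f"{name} at {bits}-bit: ~{weight_memory_gb(params, bits):.0f} GB")
```

Under these assumptions, the smaller model's weights come to roughly 10 GB at 4 bits per weight but roughly 40 GB at 16 bits, which is why fitting into 16GB depends on a compact weight format.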

Where to download gpt-oss-120b and gpt-oss-20b?

Both models are available to download right now on Hugging Face.
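For readers who prefer to script the download, here is a minimal sketch using the `huggingface_hub` package. It assumes the package is installed and that the models are published under the `openai` organization; the helper name `download_model` and the target directory are hypothetical choices for this example.

```python
# Sketch: fetch the weights from Hugging Face with the huggingface_hub package.
# Assumptions: the package is installed (pip install huggingface_hub) and the
# models are published under the "openai" organization. The helper name and
# target directory are hypothetical.

REPO_IDS = {
    "20b": "openai/gpt-oss-20b",
    "120b": "openai/gpt-oss-120b",
}


def download_model(size: str, target_dir: str) -> str:
    """Download every file of the chosen model and return the local path."""
    if size not in REPO_IDS:
        raise ValueError(f"unknown model size: {size!r}")

    # Imported lazily so this module loads even without the dependency.
    from huggingface_hub import snapshot_download

    return snapshot_download(repo_id=REPO_IDS[size], local_dir=target_dir)
```

Calling `download_model("20b", "./gpt-oss-20b")` would pull the smaller model into a local folder. Expect a download of many gigabytes, so check your disk space first.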

What’s an Open-Weight Model?

Unlike proprietary AI tools like ChatGPT, open-weight models let you inspect, fine-tune, and customize the internal parameters of the model. This transparency makes them:

- Ideal for research, local deployment, or AI agents

- Usable offline, behind firewalls, or in sensitive environments

- Modifiable for specialized domains or tasks

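Think of it like getting the trained model itself rather than just API access. Note that open weights are not the same as open source: the training data and training code are not part of the release.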

Why Is OpenAI Doing This Now?

OpenAI’s CEO, Sam Altman, says this release is part of a bigger push to make AI more accessible:

“We’re excited to make this model, the result of billions of dollars of research, available to the world to get AI into the hands of the most people possible.”

It’s a shift from OpenAI’s more closed-off strategy in recent years, and a direct response to rising competition from Meta, Mistral, and Chinese open-weight efforts like DeepSeek.

How Good Are These Models, Really?

During a press briefing, OpenAI researcher Chris Koch said the 120b model is on par with their o3 and o4-mini models — and sometimes even better. Both gpt-oss models were evaluated for benchmarks, latency, and safety, with internal testing showing low risk for misuse based on OpenAI’s preparedness framework.

Key performance highlights:

- Strong benchmark scores

- Lower latency

- Cheaper to run than proprietary models

If you’re a dev running local inference or building custom AI agents, this opens doors that were previously locked behind paywalls or closed APIs.
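As a concrete illustration of local inference, the sketch below builds a chat request for a model served on your own machine. It assumes a local server that exposes an OpenAI-compatible chat endpoint (Ollama's default address is used here) and that the model is available under the tag `gpt-oss:20b`. Both values, along with the helper names, are assumptions to adjust for your setup.

```python
import json
import urllib.request

# Assumptions: a local server exposing an OpenAI-compatible chat endpoint
# (Ollama's default address is used here), with the model available under
# the tag "gpt-oss:20b". Adjust both for your setup.
BASE_URL = "http://localhost:11434/v1"
MODEL_TAG = "gpt-oss:20b"


def build_chat_request(prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat-completions request for a local server."""
    payload = {
        "model": MODEL_TAG,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(prompt)) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With a server running, `ask("Summarize Apache 2.0 in one sentence.")` would return the model's reply without any traffic leaving your machine. Only the standard library is used, so nothing ties the example to a particular client SDK.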

What Makes This Different From ChatGPT?

Here’s what sets gpt-oss models apart from ChatGPT:

| Feature | gpt-oss | ChatGPT |
|---|---|---|
| Open Weights | ✅ | ❌ |
| Offline Support | ✅ | ❌ |
| Custom Fine-Tuning | ✅ | ❌ |
| Multimodal Support | ❌ | ✅ (for GPT-4o) |
| Free Use (Apache 2.0) | ✅ | ❌ (proprietary; free tier is limited) |

If you’re a developer or researcher who values customization and local control, the gpt-oss models are tailored for you.

Available Under Apache 2.0 — What That Means

Both models are released under the Apache 2.0 license, meaning you can:

- Use them commercially

- Modify and redistribute them

- Integrate them into proprietary or commercially licensed products

Many Qwen and Mistral models use the same license, while Meta’s Llama ships under Meta’s own community license, which adds extra conditions. OpenAI’s choice of Apache 2.0 signals a growing push for open AI infrastructure across the board.

What About Safety Risks?

Releasing powerful models openly comes with risks, and OpenAI isn’t pretending otherwise. Safety researcher Eric Wallace shared that they:

- Fine-tuned the models internally to simulate potential misuse

- Stress-tested them to see how far they could be pushed

- Found that risks didn’t exceed thresholds

Still, the team delayed the launch, which was first announced in March 2025, to run extra safety checks. It’s a cautious but necessary move in today’s AI climate.

How It Impacts the AI Arms Race

This move is also a strategic play in the ongoing AI talent and model war between giants like OpenAI, Meta, and rising Chinese players.

Meta has been dominating open-weight headlines since releasing Llama 2 and 3, followed by Llama 4, and is now focused on “superintelligence” through a new internal lab led by Alexandr Wang (ex-Scale AI). Rumors hint that Meta might ditch open models altogether due to safety fears.

That gives OpenAI a chance to lead the open-weight space in the U.S., and Sam Altman is making it political:

“We are excited for the world to be building on an open AI stack created in the United States, based on democratic values.”

Translation: OpenAI wants to beat Meta and Chinese firms by winning developer hearts and minds.

Final Thoughts — Why This Matters

This isn’t just another model release — it’s a return to OpenAI’s original vision: open tools that empower developers, researchers, and businesses. With solid performance, local deployment, and a permissive license, gpt-oss-120b and gpt-oss-20b offer serious alternatives to proprietary APIs.

If you’re someone who wants to own your AI stack and customize it for your world, this is the most exciting release of 2025.

🔥 TL;DR

- OpenAI just dropped two open-weight models: gpt-oss-120b and gpt-oss-20b

- They’re free, Apache 2.0 licensed, and run locally

- OpenAI says gpt-oss-120b performs on par with its o3 and o4-mini reasoning models

- You can fine-tune, inspect, and deploy offline

- This signals a new era of open, accessible AI from OpenAI